For decades, the premise of technical documentation was clear: humans write it and humans read it. That assumption shaped every decision, including how content was architected, structured, and translated. It also determined how content was delivered through templated documents, portals designed around user journeys, branding, and UX design.

That premise is being rewritten.

AI agents are emerging as the dominant consumers of technical content. In some organizations, this shift is already underway; in others, it is only months away. Either way, the audience that technical writers have always written and optimized for will no longer be the primary one.

Therefore, technical documentation teams must examine whether their documentation and infrastructure are built to serve AI agents. If they aren't, then they must rethink their approach to documentation creation, content delivery, and knowledge management.

The new era of AI that can plan, act, and adapt

This pivot started in 2025 with the rise of agentic AI. By late 2025, 23% of McKinsey survey respondents reported that their companies were already scaling agentic AI systems in at least one business function.

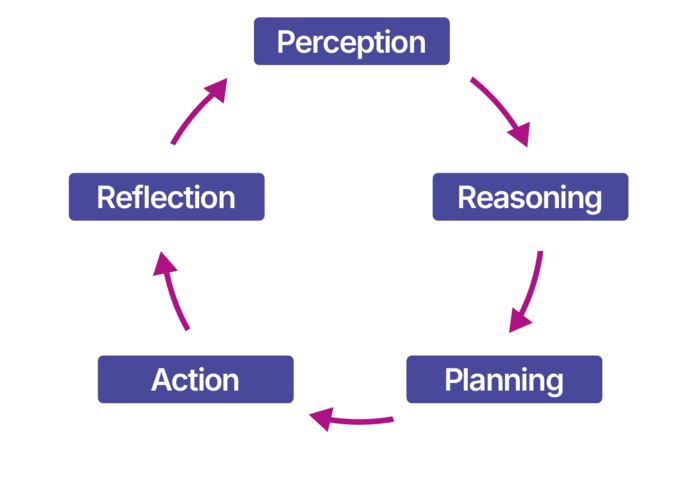

This technology has high return potential because it integrates reasoning, planning, and action. Agentic AI refers to AI systems designed to act autonomously: they can plan, make decisions, and execute complex sequences of actions without necessarily being triggered by a person. The result is a system that proactively accomplishes goals across digital tools and environments with limited human involvement.

Agentic workflows start with the “perception” phase. Here, an agent is triggered by information from sensors, database changes, or another stimulus, like a human command. Next comes “reasoning” performed by Large Reasoning Models (LRMs). LRMs are a new kind of LLM specifically trained for “thinking”. While reasoning, these models build plans for how to achieve their goals. The agent then acts, rather than suggesting a process to a human. Finally, agents review outcomes to improve future results.
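The four phases above can be sketched as a small loop. This is a toy illustration only, not how any particular agent framework works: the `Agent` class, the `fake_planner` function standing in for the reasoning model, and the tool names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Toy agent; every name here is hypothetical and illustrative."""
    plan_fn: Callable[[str], list[str]]      # stands in for the reasoning model
    tools: dict[str, Callable[[], str]]      # actions the agent is allowed to take
    history: list[tuple[str, str]] = field(default_factory=list)

    def run(self, trigger: str) -> list[tuple[str, str]]:
        # Perception: the trigger is the stimulus (event, data change, human command).
        # Reasoning: turn the trigger into an ordered plan of tool names.
        steps = self.plan_fn(trigger)
        results = []
        for step in steps:
            # Action: execute each planned step instead of suggesting it to a human.
            if step in self.tools:
                outcome = self.tools[step]()
            else:
                outcome = "skipped: unknown tool"
            results.append((step, outcome))
        # Review: keep the outcomes so later runs can learn from them.
        self.history.extend(results)
        return results


def fake_planner(trigger: str) -> list[str]:
    # Hypothetical stand-in for a reasoning model.
    if "ticket" in trigger:
        return ["lookup_docs", "reply_to_user"]
    return []


agent = Agent(
    plan_fn=fake_planner,
    tools={
        "lookup_docs": lambda: "found article",
        "reply_to_user": lambda: "reply sent",
    },
)
result = agent.run("new ticket: printer offline")
```

In a real system the planner would be a model call and the review step would feed back into future plans; here the history list only records what happened.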

Line-of-business (LOB) applications have integrated agentic workflows into their solutions. For example, CRM solutions embed AI Sales Development Representatives that manage full prospecting cycles, and help desk tools use AI agents to resolve user tickets.

Agentic AI for cross-domain use cases

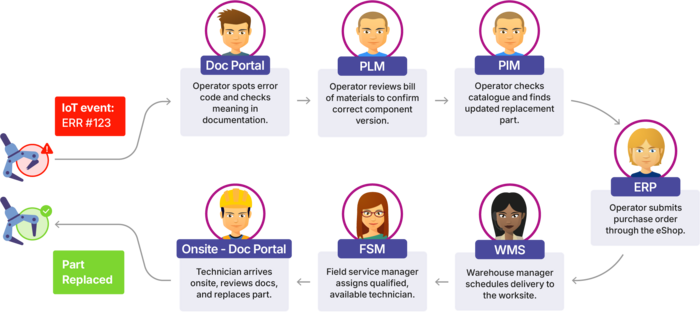

The real game-changing application of agentic technology is in cross-domain use cases. Solving a complex problem jointly requires several stakeholders to interact with many independent systems. For example, in a typical service procedure, even a simple process becomes tedious and complicated when it involves back-and-forth with specialists who each use a different solution.

These steps are essential, yet their mechanical nature makes them good candidates for automation.

Until now, the only way to automate this process was to script the steps and interactions with each application. However, processes with this much variability are difficult to code. Teams must handle unexpected events that could break the script and add complexity (e.g., a part is out of stock, shipping is delayed, or the technician is sick on the scheduled procedure day). The result is a high-maintenance system that requires the upkeep of thousands of lines of bespoke code and is poorly suited to such wide variations in parameters and conditions. The manual work required to write the code and manage the errors would offset the anticipated time and effort savings.

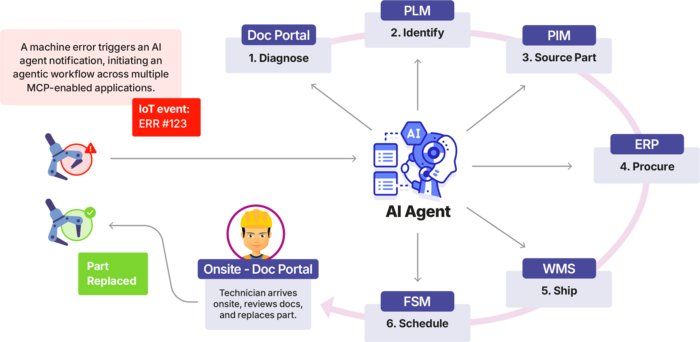

Now, with agentic AI, a workable solution finally exists. Teams can simply write a plain text description of their process, and the AI agents will talk to each system to manage the full workflow. This is faster, easier, and cheaper to maintain than a system of bespoke code.

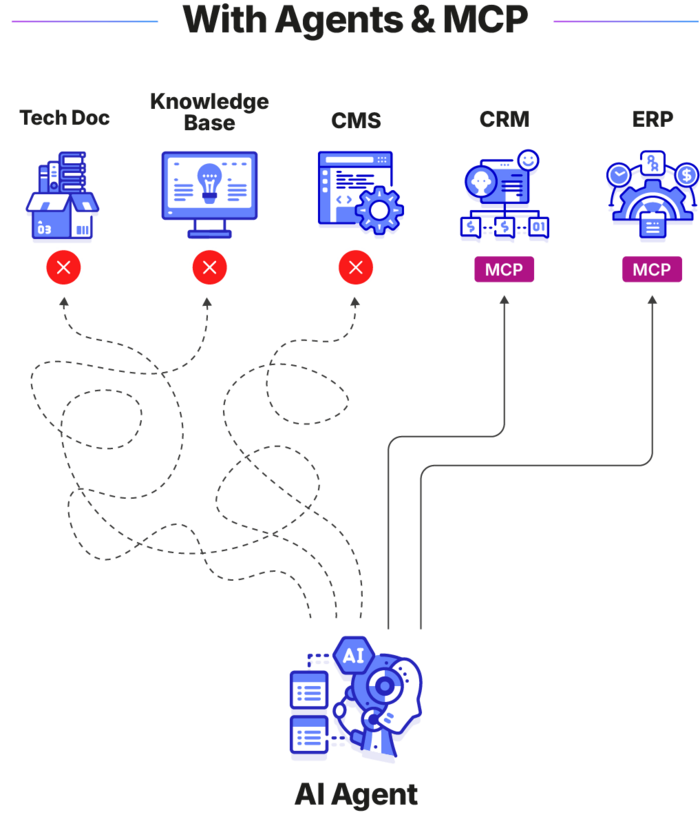

Agentic systems and LLMs use Model Context Protocol (MCP) servers to communicate with documentation and other applications. MCP is a universal, open standard. It allows AI systems to connect to any external application wrapped in an MCP server to gather information and perform actions.
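To make this concrete, here is a minimal sketch of the kind of message an agent sends to an MCP server. MCP messages follow JSON-RPC 2.0, and `tools/call` is the method used to invoke a tool. The tool name `search_docs` and its `query` argument are hypothetical, and real deployments would typically use an MCP SDK rather than building messages by hand.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool exposed by a documentation server.
request = build_tool_call(1, "search_docs", {"query": "replace filter cartridge"})
```

The point is that the agent never needs to know how the documentation is stored: it only needs a server that advertises tools and answers calls like this one.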

Common problems blocking agentic workflows

Understanding these fundamentals lays the foundation for addressing the core challenges in successfully deploying agentic AI.

Lack of MCP readiness

Applications must be MCP-capable for their information to be accessible to AI agents. This is already the case for large LOB applications like help desks and CRMs, making them well-suited for AI integration.

The challenge lies with informally organized content residing in files and various repositories. From shared drives to Enterprise Content Management (ECM) systems, wikis, and CCMSs, many sources lack MCP enablement, making their information inaccessible to agentic systems. As a result, when companies build agentic systems, entire bodies of knowledge are left out because LLMs and LRMs cannot reach applications that are not MCP-enabled. This creates blind spots for AI, leading agents to introduce errors and incomplete information into workflows.

Scattered knowledge

Even if these disparate systems and repositories were MCP-enabled, they would still cause confusion and errors among agents. When information is scattered, agentic systems don’t know which application holds the authoritative answer.

Just as humans rely on subject matter experts for specific inquiries, agents need a system of reference for each field or business domain. This is usually in place for core enterprise systems, such as Customer Relationship Management (CRM) for customer data and Enterprise Resource Planning (ERP) for HR and finance, which serve as identifiable systems of record for a given domain. However, fragmented content is difficult to organize into clear canonical references. Without unified, domain-specific knowledge, AI agents cannot reliably ground their answers in a single source of truth. For example, if an agent is presented with ten different applications hosting product knowledge, it may consult only some of them and miss critical information. Therefore, knowledge must first be unified by business domain into an MCP-enabled reference system.
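One way to picture a "system of reference per domain" is a registry that maps each business domain to exactly one authoritative server. The sketch below is purely illustrative: the domain names, server names, and the `route` function are assumptions, not part of MCP or any product.

```python
# Hypothetical registry: one authoritative MCP server per business domain.
SYSTEMS_OF_RECORD = {
    "customer": "crm-mcp-server",
    "finance": "erp-mcp-server",
    "product": "product-knowledge-mcp-server",
}


def route(domain: str) -> str:
    """Return the single authoritative server for a domain, or fail loudly."""
    try:
        return SYSTEMS_OF_RECORD[domain]
    except KeyError:
        # Failing is safer than letting an agent guess among scattered sources.
        raise LookupError(f"No system of record registered for domain {domain!r}") from None


product_server = route("product")
```

With ten overlapping product-knowledge sources there is no single value to put in the `"product"` slot, which is the problem unification is meant to solve.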

Content isn’t fit for AI

Writers often prepare content for human audiences in formats like PDF and HTML, but these formats are not adapted for an AI audience. They discard the granularity and tags that may have existed in the original source content before formatting was applied for the human eye. Most content is therefore available only as monolithic documents that lack context and include visual indicators that are, at best, unnecessary for AI agents and, at worst, misleading to them. Together, these issues increase the risk of hallucinations when the content is consumed and interpreted by AI.

Three steps to making content fit for AI

As organizations adopt agentic AI, technical writers must get ready by:

- preparing new content for AI,

- unifying documentation,

- and keeping ownership of the content while making it available through MCP.

Step 1: Write more

Yes, you read that correctly. In recent years, technical documentation teams feared being replaced by AI, but in truth, businesses need the opposite. Companies need more resources and writers allocated to documentation teams to make the following three content adjustments:

- Surface the implicit. The LLM in the agentic system isn’t as domain-savvy as your clients, technicians, and agents. Humans have implicit knowledge from lived experiences and technical understanding, so documentation doesn’t include obvious and minute details. Since machines lack these implicit understandings, they often struggle to interpret context. Domain-specific information and knowledge specific to your company are especially unknown to LLMs and must be surfaced.

- Document the “why” and not just the “how”. Most documentation explains how things work: how products are built, how to follow procedures, how to achieve specific goals, and more. However, when LLMs query content, they often start with higher-level questions about why certain steps and procedures are required. Writers must fill in the content gaps to create a bridge from the AI’s query intent to the documentation.

- Use internal, expert knowledge to bolster content. Each company has vast collections of valuable knowledge scattered inside the brains of subject matter experts, developers, and support agents. Either through expert interviews or expert-led first drafts, technical writers must extract and document this information. These insights and additional context help AI systems make better, more informed decisions.

By implementing these content recommendations, you will augment your documentation with new, detailed expert knowledge and domain information that provides context for LLMs. Because the audience for this added content is AI systems rather than humans, creating it is radically simpler.

This knowledge doesn’t have to be managed like traditional documentation. Human-focused content is written in richly semantic formats like DITA, but much of that markup exists to support human readability and delivery. Additional content written solely for AI can remain in flat text as long as it keeps its granularity and metadata. What matters most is that the information exists to provide additional context. AI doesn’t need the semantic or XML tags used to render a complex, visually engaging document for humans. This change to content creation is possible because AI can ingest and understand many pages of dense text without the visual cues that humans need to stay focused.

Instead, teams can prepare content for AI in formats like Markdown, with the ability to add metadata tags in each section of content and create separate, granular files.
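As a rough sketch of what granular, tagged Markdown could look like in practice, the example below splits a document into one chunk per `## ` heading and reads metadata from HTML comments placed under each heading. The comment-based tag convention, the `Chunk` class, and the `PumpX` product are assumptions made for illustration; teams may prefer front matter or another convention.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    title: str
    metadata: dict[str, str]
    body: str


# Assumed convention: metadata lines look like <!-- key: value -->
META_LINE = re.compile(r"^<!--\s*(\w+):\s*(.+?)\s*-->$")


def split_markdown(text: str) -> list[Chunk]:
    """Split Markdown into one chunk per '## ' heading, collecting metadata tags."""
    chunks: list[Chunk] = []
    current = None
    for line in text.splitlines():
        if line.startswith("## "):
            current = Chunk(title=line[3:].strip(), metadata={}, body="")
            chunks.append(current)
        elif current is not None:
            match = META_LINE.match(line.strip())
            if match:
                current.metadata[match.group(1)] = match.group(2)
            else:
                current.body += line + "\n"
    for chunk in chunks:
        chunk.body = chunk.body.strip()
    return chunks


SAMPLE = """\
## Replace the filter
<!-- product: PumpX -->
<!-- audience: technician -->
Power off the unit before opening the housing.

## Why the filter matters
<!-- product: PumpX -->
A clogged filter reduces flow.
"""

chunks = split_markdown(SAMPLE)
```

Each chunk can then be stored as its own small file or record, so an agent retrieves only the section it needs along with the tags that say what it applies to.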

Step 2: Unify and prepare the content

While your documentation may be comprehensive and fit for AI understanding, this won’t mean anything if agentic systems can’t find it. Navigating a siloed landscape is painful but manageable for humans; for AI agents, it is disastrous. Technical documentation teams must create a single, centralized source of authoritative product knowledge to help ground agents in a reliable source of truth. This means gathering product content from across sources (e.g., CCMS, LMS, wikis, and SharePoint) into a single knowledge repository. It also means restructuring diverse formats to unify them and then enriching the content so it is optimized for AI.
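The "restructure and unify" step can be pictured as mapping each source's own field names onto one shared schema. The sketch below assumes two hypothetical sources, a wiki and a CCMS, with invented field names; real connectors would differ, and enrichment is left out for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    """Shared schema for every document in the unified repository (hypothetical)."""
    doc_id: str
    title: str
    body: str
    source: str


def from_wiki(page: dict) -> Doc:
    # Assumed wiki export fields: slug, name, content.
    return Doc(f"wiki:{page['slug']}", page["name"], page["content"], "wiki")


def from_ccms(topic: dict) -> Doc:
    # Assumed CCMS export fields: id, title, text.
    return Doc(f"ccms:{topic['id']}", topic["title"], topic["text"], "ccms")


def unify(wiki_pages: list[dict], ccms_topics: list[dict]) -> dict[str, Doc]:
    """Merge both sources into one repository keyed by a source-prefixed ID."""
    docs = [from_wiki(p) for p in wiki_pages] + [from_ccms(t) for t in ccms_topics]
    return {doc.doc_id: doc for doc in docs}


repository = unify(
    [{"slug": "reset-pump", "name": "Reset the pump", "content": "Hold the button."}],
    [{"id": "T100", "title": "Pump overview", "text": "The pump moves fluid."}],
)
```

Keeping the source in both the ID and a field preserves provenance, which helps with governance once everything lives in one place.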

Product documentation readiness is more critical for scaling Agentic AI than model sophistication. Technical writers must ensure knowledge is organized, governed, and accessible for AI workflows. The easiest way to achieve this is by building a unified repository of content optimized for AI that serves as the referential authority on product knowledge.

Step 3: Control ownership while making content MCP-ready

Creating this repository to unify content across sources may sound simple, but teams should refrain from building their own platform. What looks like a fun project can rapidly turn into a rabbit-hole nightmare and a liability. Would you develop your own CRM or ERP? No. So why would you build a knowledge management platform? Gone is the time of pilots and home-grown developments for experimenting with trendy, but ultimately irrelevant, approaches.

Successful agentic AI requires enterprise-grade products that combine validated, up-to-date technology (which is changing at an unprecedented pace) with test and validation methodologies and with processes for execution and monitoring.

IT and agentic developers don’t need full access to your content. They just need to use it. Instead of dumping your content somewhere or giving it away for IT to manage, you need a solution that allows any team to connect to your documentation for AI workflows via MCP. Invest in a dedicated knowledge solution that seamlessly integrates with your existing content sources, that offers access rights management, content governance, and content quality control, and that is MCP-enabled. The key is to own your technical documentation.

Takeaways

As agentic AI expands, technical documentation teams must change their perspectives on why and how to write content. This shift is inevitable and has already started for early adopters. Each team will need to consider which new AI use cases their company wants to implement. Based on this, they can determine which information the systems will need and update their content accordingly so that AI can understand it.

On the infrastructure side, teams shouldn’t waste time trying to build their own unified repository solution. Instead, they should buy a ready-to-use, domain-specific solution that is MCP-capable. For an authoritative source of product knowledge, gateway solutions like Product Knowledge Platforms offer the AI-native capabilities that your team needs to start designing agentic workflows.